by Yvette Depaepe

Published the 8th of May 2026

To all mothers around the world — this is for you.

May your love be seen, your strength honored, and your hearts forever surrounded by flowers of gratitude.

Motherhood is rarely still. It moves, bends, stretches like light across a room that never quite lands the same way twice. And yet, in photography, we try to hold it. To frame the fleeting. To give permanence to something that is, by nature, always changing.

Photography has long been a language of memory, and mothers often exist at its center—sometimes visible, often behind the lens. Many of us carry albums shaped by a mother’s gaze: birthdays, scraped knees, first days of school, the ordinary made meaningful simply because it was seen, cared for, remembered.

And yet, how often is she missing from the frame?

This Mother’s Day, perhaps the most powerful act is not just to photograph mothers, but to see them. Not as archetypes of care or symbols of strength, but as individuals—complex, evolving, human. Capture the laughter lines, the quiet pauses, the moments of solitude. Let the camera acknowledge not only what they give, but who they are.

In contemporary photography, there is a growing movement toward this honesty. Artists are turning inward, documenting motherhood not as an ideal, but as an experience—raw, tender, imperfect. These images resonate because they dismantle the myth of perfection and replace it with something far more compelling: authenticity.

This Mother’s Day, perhaps the most meaningful images are not the ones we plan, but the ones we allow. A moment of stillness. A glance. A pause between movements. The kind of photograph that doesn’t announce itself, but stays. Because long after the day has passed, what remains is not just what was seen—but who was there, holding it all together, just beyond the edge of the frame.

But you don’t need a gallery or a project to participate in this shift. It begins simply. A photograph taken with intention. A moment noticed instead of overlooked. A willingness to include the mother—not just as the observer, but as the subject. Because one day, these images will become anchors. Proof of presence. Of love that existed not in grand gestures, but in quiet, persistent ways.

So this Mother’s Day, pick up your camera—or your phone—and look again.

Not for perfection, but for truth.

Not for the extraordinary, but for the deeply familiar.

And press the shutter.

Mums, you are the Women Who Hold the World Together,

keeping it stable and providing a foundation for all that we have.

‘laundry’ by asit

‘Love’ by Martin Krystynek MQEP

‘MOTHER WITH HER SON’ by Juan Luis Duran

‘Motherhood. Now’ by Svetlana Melik-Nubarova

‘Family day’ by Samanta Krivec

‘Mother’s jump’ by Marc Apers

‘Mothers protection’ by Tatyana Tomsickova

‘Sometimes the power of motherhood is greater than the laws of nature’ by Martin Lee

‘Forever in my heart’ by Vito Guarino

‘This is the Miracle of Life’ by Yvette Depaepe

‘Just a seagull pulling my girls’ by John Wilhelm

‘Mom’s All Mine: Donna & Dalina’ by Andre du Plessis FRPS

‘Mom?’ by Heiko Riekers

‘Three generations’ by Jean-Louis VIRETTI

‘generations’ by Robert

‘The Look of a Mother’ by Ray Clark

‘385’ by Antonio Grambone

‘Yes, that’s my mother’ by João Coelho

‘30 / 30’ by Holger Droste

‘Shopping with Mum’ by Anita Meezen

‘Etsy Mum’ by Tanya Love


| Sunil Kulkarni PRO What an outstanding collection, and perfectly timed for the upcoming Mother's Day. Kudos to Yvette and all the photographers. Love it. |

| Eric Chatelain Photography PRO A superb collection of true masterpieces – thank you for sharing these wonders, and well done to the selected photographers! |

| Yvette Depaepe CREW Thank you, dear Eric ... |

| Yinghui Dan PRO Excellent collection, filled with love! Happy Mother’s Day! |

| Yvette Depaepe CREW Thanks, Yinghui Dan ... It is so nice to spread love via the magazine ;-) |

| Ying Zhang PRO Very nice pictures! Happy Mother's Day! |

| Yvette Depaepe CREW Thanks, Ying Zhang ... same to you and your family! |

| Martin Krystynek MQEP CREW Thank you so much for the selection |

| Yvette Depaepe CREW My pleasure, dear Martin!!! |

| Jealousy PRO Thank you for this beautiful selection of photographs and words, dear Yvette. At this moment, this page is the most peaceful and love-filled place in the world. |

| Yvette Depaepe CREW Oh what a heartwarming and touching comment, dear Jealousy ... Thank you so much. ♥♥♥ |

| Miro Susta CREW Lovely mother's day contribution, well done Yvette, thank you 😊 🙏 😀 |

| Yvette Depaepe CREW Thank you, Miro ... |

| Jane Lyons CREW Really wonderful, Yvette. The selection of photographs is so poignant ..just brilliant! Thanks |

| Yvette Depaepe CREW Thanks a lot, dear Jane ... Happy Mother's Day to you (I don't know if you celebrate it now over there) but ♥♥♥ |

| Colin Dixon CREW Wonderful words and stunning selection of pictures to go with them. |

| Yvette Depaepe CREW Thank you so much, Colin ... ♥ |

by Yvette Depaepe

Published the 6th of May 2026

'Connection and interaction in photography'

Photography lives in the space between observer and subject. True images are born not from looking, but from connection - from the quiet exchange of presence, trust and awareness. Thanks for the heartwarming and precious submissions.

The winners with the most votes are:

1st place: Roberto Corinaldesi

2nd place: Hilda van der Lee

3rd place: Louie Luo

Congratulations to the winners and honourable mentions.

Thanks to all the participants in the contest 'Connection and interaction in photography'

The currently running theme is 'Tall versus Small'

The contrast between tall and small in photography is a powerful visual tool that expresses more than physical size — it communicates emotion, hierarchy, vulnerability, and strength. By manipulating proportion and perspective, photographers can guide how we feel about a scene.

This contest will end on Tuesday the 19th of May 2026 in the afternoon.

The sooner you upload your submission, the better your chance of gathering the most votes.

If you haven't uploaded your photo yet, click here.

You can see the names of the TOP 50 here.


| Miro Susta CREW Most interesting subject, excellent photos, congratulations to Roberto and other mentioned participants, thank you Yvette for excellent work. |

| Nichole Chen PRO Congratulations to all for your outstanding works. |

| Congratulations, Roberto, on your first place. Thanks to those who voted for my photo. The hands are the hands of my mother, 95 years old, 3 months before she died. Here she is holding the newborn of her friend. I think one can understand how proud I am to be second in this contest, although I would be even more proud if the photo were in first place ❤ |

by Yvette Depaepe

Published the 4th of May 2026

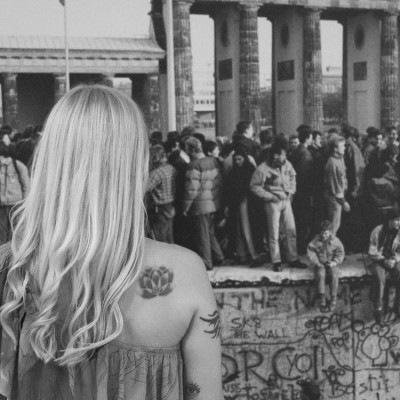

This month's featured exhibition is titled 'Street as Space and Muse' by Marius Cinteza

Marius introduces his outstanding exhibition to us as follows:

"An old man strolls through his thoughts, a dog waits for its owner left behind outside the frame, an elderly lady hurries home, a child seems forgotten in a carousel seat, a silhouette appears frozen in the middle of a bridge in the morning, a traveler savors a coffee enveloped in cigarette smoke on a train platform, a little girl seems lost in a maze-like space... I don't know if all these fragments of time existed for others in the same way; for me, they actually form a personal journal, the album of my journey through this fascinating and inspiring space."

I invite you to explore the street as a space for inspiration, just as Marius did.

This exhibition will be on display on our opening page / Gallery throughout May 2026.

Click here to see the entire exhibition: [978] Street as Space and Muse by Marius Cinteza

To trigger your curiosity, here is a short selection of images ...

'Multiple me'

'A walk of wisdom'

'apart'


| Ying Zhang PRO Congratulations ! deep thinking pictures! I like it very much |

| Gabriela Pantu PRO Such a delight this exhibition, dear Marius, a strong and deep insight, great visual language, street photography with the fine art aura. Love it! Thank you, as always, dear Yvette! <3 <3 |

| Eiji Yamamoto PRO Dear Marius, thank you so much for the wonderful and inspiring exhibition! Dear Yvette, thank you so much for introducing this exhibition to us! |

| Inci Koyuncu PRO I liked it very much... congratulations |

| Congrats, Marius! |

| Marc Apers CREW Love your work Marius, congrats! |

| Magda Fulger PRO Congratulations! |

| Kevin Christonar PRO fantastic! |

| Dosa Ionel Eugen PRO Great body of work. Congrats Marius. |

| Marius Surleac PRO wonderful! |

by Editor Jane Lyons

Edited and published by Yvette Depaepe, the 1st of May 2026

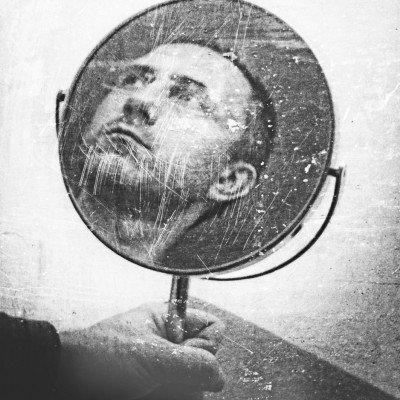

“of men and machines”

As we all know, art is subjective.

If ten people were asked to select their twenty favourite photographs from Adrian Donoghue’s extensive portfolio, the results would undoubtedly be very different. This edit is not meant to be definitive. It is simply a reading of Adrian's body of work through one set of eyes.

Adrian Donoghue is a photographer whose instantly recognizable work is cinematic, atmospheric and steeped in narrative. His images unfold like frames from an unseen film, brimming with mystery, intrigue and subtle drama. Drawing heavily on the visual language of film noir, he constructs urban scenes that are both elegant and unsettling, where tension lurks beneath a veneer of sophistication.

Set largely against the backdrop of Melbourne, Australia, his photographs transform familiar city streets into stages for psychological storytelling. Rain-slicked pavements, muted colour schemes and carefully controlled lighting create a world that feels suspended in time. His compositions are meticulously designed, often employing strong geometry and leading lines that draw the viewer further into the narrative. A recurring feature of his work is the lone figure positioned at the edge of the foreground.

A defining element of Adrian’s work is his own presence within it. Frequently dressed in a dark overcoat and black fedora and carrying his signature umbrella, he appears not just once, but often multiple times within the same frame. These repeated incarnations suggest layered identities and inner dialogues, inviting viewers to consider themes of self, memory and duality. This device is both theatrical and deeply psychological and is perhaps informed by his parallel career as a clinical psychologist.

“is there anybody out there?”

“Colour my world”

“Rainy Day in London 2”

“The chase”

“After midnight”

“The Italian job”

“In lonely cities live lonely men 7”

“Letters”

“The monolith”

In recent years, Adrian's visual storytelling has evolved to encompass his granddaughter, Matilda, who has become a significant presence in his work. Her presence introduces subtle shifts in tone, with moments of tenderness and connection emerging within his otherwise controlled and enigmatic world. This evolving dynamic adds emotional depth to his work while maintaining the integrity of his established style.

“Undercover 3”

“Against the tide”

“incident at the old clock tower”

“The babysitter 2”

“Where’s Matilda?”

Post-processing plays a crucial role in shaping his aesthetic. His images possess a painterly quality, achieved through careful compositing, which elevates them beyond straightforward photography. Yet despite this stylization, his work never loses its sense of realism. The result is a body of work that feels both cinematic and timeless — crafted, yet never artificial.

Adrian Donoghue is distinguished not only by his technical mastery, but also by his unwavering commitment to a singular vision. His style has remained remarkably consistent throughout his career, which is a testament to his clear artistic identity. His sophisticated and genteel images are often laced with irony and understated humor, rewarding viewers who linger and look beyond the surface. His early work, with its references to the painter Edward Hopper, is wonderful.

“Running man 3”

“The readers”

“Lonely men build lonely cities 2”

“to lay with friends”

“The lifeguard”

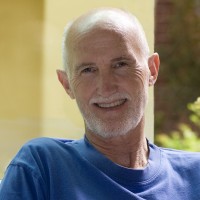

Since joining 1x, Adrian has built an impressive record, with over 500 awarded images—a reflection of both his productivity and the enduring appeal of his work. Each photograph stands as a carefully constructed story, yet together they form a cohesive and compelling body of work that continues to captivate audiences. Adrian Donoghue does not simply capture moments—he creates worlds.

Your work is inspiring, Adrian. Thank you for being a part of the 1x community for almost 18 years and for sharing your work with us.


| Ying Zhang PRO Works of art endure for eternity. |

| Miro Susta CREW Wonderful photo creation, accept my congratulations Adrian and many thanks to Jane and Yvette for publishing it |

| Adrian Donoghue PRO thanks Miro |

| Yaping Zhang PRO Many thanks to the master Adrian for sharing such delightful and deeply moving creative works of art! Thank you! Congratulations! |

| Adrian Donoghue PRO many thanks |

| Adrian Donoghue PRO Many thanks Jane! I've been away for a few days and missed this, what a nice surprise, much appreciated! |

| Jane Lyons CREW Your portfolio is incredible Adrian. It was impossible to select 20 favorites. It was Yvette who suggested that I focus on you and I am so happy that I did. |

| Yvette Depaepe CREW Adrian, ever since I joined 1x, I have admired your creativity and superb work. I really enjoyed Matilda appearing in your wonderful interactive photographic work. I saw your granddaughter growing up to be a lovely girl, and I could see the bond between the two of you in every single piece of work. I'm glad and proud that Jane has put you in the spotlight in this magazine article. Cheers to a great artist! Yvette. |

| Adrian Donoghue PRO thanks Yvette! you always have kind words |

| Offer Ellbogen PRO Remarkable and fascinating images............well done dear Adrian..........Congratulations !!! |

| Adrian Donoghue PRO thanks Offer! |

| Thierry Dufour PRO Stunning work, congrats Adrian. Splendid article !!! |

| Adrian Donoghue PRO thank you Thierry |

| Eiji Yamamoto PRO Dear Adrian, thank you so much for the wonderful and great photos! Very creative and emotional! Thank you so much dear Jane and dear Yvette! It's very inspiring! |

| Adrian Donoghue PRO thanks Eiji |

| Heike Willers PRO Thank you for sharing the beautiful work from a wonderful photographer! Love his work! |

| Adrian Donoghue PRO thanks Heike |

| Wonderful article on a wonderful photographer! |

| Adrian Donoghue PRO thanks Christine |

| Michel Romaggi CREW I really like your work, Adrian. This article once again highlights all your creative talent. Congratulations and thank you to Jane. |

| Adrian Donoghue PRO thanks Michel |

| Fiorenzo Carozzi PRO An extraordinary artist with unique and immediately recognizable photographic narratives. I have followed his photography for many years, and each time it is a stunning surprise. Thank you for publishing this magnificent portfolio. |

| Adrian Donoghue PRO thanks Florenzo |

| excellent collection of fascinating photographic art, I am a fan since a long time. Thanks also to Jane for the article |

| Adrian Donoghue PRO thanks Hans-Wolfgang |

| Wayne Pearson PRO I have followed Adrians creative and inspirational work for many years, and he never ceases to amaze me with his wonderful and unique style, capturing and expressing his version of the world around us. Thank you Adrian for allowing me to constantly realise that there is more than meets the (average humans) eye. |

| Adrian Donoghue PRO Hi Wayne, thanks! |

| Peter Hammer PRO A well deserved article Adrian. |

| Adrian Donoghue PRO Hi Peter, many thanks |

| Caterina Carrara PRO Congratulations, a unique, beautiful and truly interesting body of work |

| Adrian Donoghue PRO thanks Caterina |

| Andreas Agazzi PRO Your work is simply unique and fascinating, keep it up Adrian! |

| Adrian Donoghue PRO thanks Andreas! |

| Inspirational work indeed! Thank you for the feature and introducing me to Adrian’s work. |

| Adrian Donoghue PRO thanks Elaine |

| Alberto Fasani PRO my favourite photographer in the 1x community! |

| Adrian Donoghue PRO thanks Alberto |

| Dazhi Cen PRO Excellent work and learn a lot. |

| Adrian Donoghue PRO thanks Dazhi |

| Awesome body of work Adrian. I always look forward to seeing your images. Best regards, Patrick |

| Adrian Donoghue PRO thank you Patrick |

| Izabella Végh PRO I have been waiting for this article for a long time. A photographer with not only great talent but also exceptional imagination. Congratulations! |

| Adrian Donoghue PRO many thanks Izabella |

| I completely agree with the article. I've been following your work for years and I want to express my admiration and congratulations on your original creations. Congratulations, Adrian! |

| Adrian Donoghue PRO thanks Jois ! |

| I've been following Adrian's work for years and I find it fascinating. My congratulations once again. |

| Adrian Donoghue PRO many thanks Eduardo |

by Editor Lourens Durand

Edited and published by Yvette Depaepe, the 28th of April 2026

‘Wood Anemone’ by Mandy Disher

My wife and I were fortunate to be able to join a bus tour of the annual wildflower spectacle in the fields of the Western Cape of South Africa. This extravaganza of nature normally takes place in August/September, depending on the region’s winter rainfall, and typically showcases field upon field of red, yellow, orange and blue carpets of flowers.

What an experience!

But to capture the full glory and emotional impact of this annual wildflower spectacle on film is not easy.

Apart from the crowds of fellow travellers all over the scene, the sheer size of the spectacle is hard to depict without the image becoming just a mass of colour with no clear point of interest.

I guess all photographers face the same problem in any display of nature anywhere in the world, so the problem is not unique, but here are some tips that I found useful.

· One approach is to step back and walk around, away from the group to look for:

o isolated flowers in a small group

o leading lines

o points of interest like a farmhouse, farm implement, a lonely tree or a windmill on one of the thirds that can act as an anchor

· Experiment with lighting:

o side lighting, back or front lighting to give different effects

o the golden hour is the best, but not always possible

o even cloudy days, with the clouds acting as a diffuser, can give good soft light

· Go in close to capture individual flowers

· Try shooting upwards from below to show the underside of a flower, with a backdrop of clouds in the sky

· Include a person in the picture, to add fun or emotion

· Look for patterns

· Use a polarizing filter to reduce reflections and enhance colours

As far as camera settings are concerned:

o Aperture: use a small aperture (f/8 to f/16) for greater depth of field, keeping more of the flower in focus.

o Shutter speed: as far as possible, use 1/125s or faster for handheld shots to avoid motion blur.

o ISO: keep it as low as you can (100-400) to reduce noise.

o Focus stacking: where possible, this can work well for close-ups.

o White balance: set to the prevailing conditions or auto.
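For readers who like to see the numbers, the aperture tip can be illustrated with the standard thin-lens depth-of-field formulas. This is only a rough sketch: the 100 mm focal length, the 1 m subject distance and the 0.03 mm circle of confusion (a common full-frame convention) are assumed values chosen for illustration, and the function names are hypothetical.

```python
import math


def hyperfocal_mm(focal_mm: float, f_number: float, coc_mm: float = 0.03) -> float:
    """Hyperfocal distance in mm: H = f^2 / (N * c) + f."""
    return focal_mm ** 2 / (f_number * coc_mm) + focal_mm


def depth_of_field_mm(focal_mm: float, f_number: float, subject_mm: float,
                      coc_mm: float = 0.03) -> tuple[float, float]:
    """Return the (near, far) limits of acceptable sharpness in mm."""
    h = hyperfocal_mm(focal_mm, f_number, coc_mm)
    near = subject_mm * (h - focal_mm) / (h + subject_mm - 2 * focal_mm)
    if subject_mm >= h:
        # At or beyond the hyperfocal distance, sharpness extends to infinity.
        return near, math.inf
    far = subject_mm * (h - focal_mm) / (h - subject_mm)
    return near, far


if __name__ == "__main__":
    # Assumed scenario: 100 mm lens, flower 1 m away, apertures from the tips above.
    for n in (8, 11, 16):
        near, far = depth_of_field_mm(100, n, 1000)
        print(f"f/{n}: sharp from {near:.0f} mm to {far:.0f} mm "
              f"(depth {far - near:.0f} mm)")
```

Under these assumptions the zone of sharpness roughly doubles between f/8 and f/16, which is why stopping down helps keep a whole flower in focus.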

Hopefully these tips will be of some use to you as a starting point at least.

Here is a selection of wildflower photographs taken by 1X.com photographers for your inspiration and enjoyment.

‘So Long for This Moment’ by Marc Adamus

‘Walking in Tuscany’ by Paolo Lazzarotti

‘Making Haste’ by Ryan Dyar

‘Sunstorm’ by Ryan Dyar

‘Revelation’ by Ryan Dyar

‘Himalayan Blue Poppy’ by Ruiqing P.

‘Calla Lily world’ by Gerald Macua

‘Springtime Rush’ by Patrick Marson Ong

‘Carpet of Wildflowers’ by Mei Xu

‘Dreaming Beauty’ by Henrik Spranz

‘Tiny garden in a summer field’ by Ludmila Shumilova

‘In chorus’ by Roberto Marini

‘Afternoon’ by Csaba Tokolyi

‘Among the daffodils’ by Ales Krivec

‘red & white’ by Hilda van der Lee

‘On the edge of the cliff’ by Jorge Ruiz Dueso

‘Our short beauty’ by Ylva Sjörgen

‘Bloomdido’ by Abdulkhalek Bakir

‘Poppies in the fog’ by Sergio Barboni

‘Rainbow over Blue Columbine in Colorado Valley’ by Mei Xu


| Ying Zhang PRO Stunning, enjoy! |

| Yaping Zhang PRO Thank you very much, Lourens, for sharing these exquisite photos of flowers in full bloom! I like them very much. |

| Eiji Yamamoto PRO Dear Lourens, thank you so much for this wonderful article with beautiful and great photos! The breathtaking beauty of spring. Dear Yvette, thank you so much as always! |

| Lourens Durand CREW Thank you, Eiji. |

| Kathryn King PRO Wonderful wild flower photos! |

| Sunil Kulkarni PRO Excellent collection of wild flowers. Lourens, you did a fantastic job making this collection. Kudos to you and the photographers, and thanks for the tips. Love it. |

| Lourens Durand CREW Thank you, Sunil. |

| Mei Xu PRO Beautiful collection and useful tips! Thank Lourens and Yvette for including my photos in this stunning article. |

| Lourens Durand CREW Thank you, Mei. |

| Nichole Chen PRO What a beautiful collection of wild flowers — many thanks to Yvette and the team for putting this together. |

| Lourens Durand CREW Thank you. |

| Ramiz Sahin PRO Good work!!! |

| Lourens Durand CREW Thanks. |

| It is magnificent indeed! |

| This is a real feast to the eyes, my sincere congratulations to all the featured photographers for their outstanding and inspiring work! |

| Such a wonderful collection of spring flowers |